BRAIN WAVES IN THE SERVICE OF SCIENCE

Research aimed at helping immobilized or paralyzed patients is advancing day by day. A program that moves a virtual robotic arm on a computer screen using brain waves has been designed at Izmir University of Economics (IUE). With his TUBITAK-funded project, Assoc. Prof. Dr. Yuriy Mishchenko, Lecturer at the IUE Department of Biomedical Engineering, is working toward robotic hardware that would enable patients to move in real life.

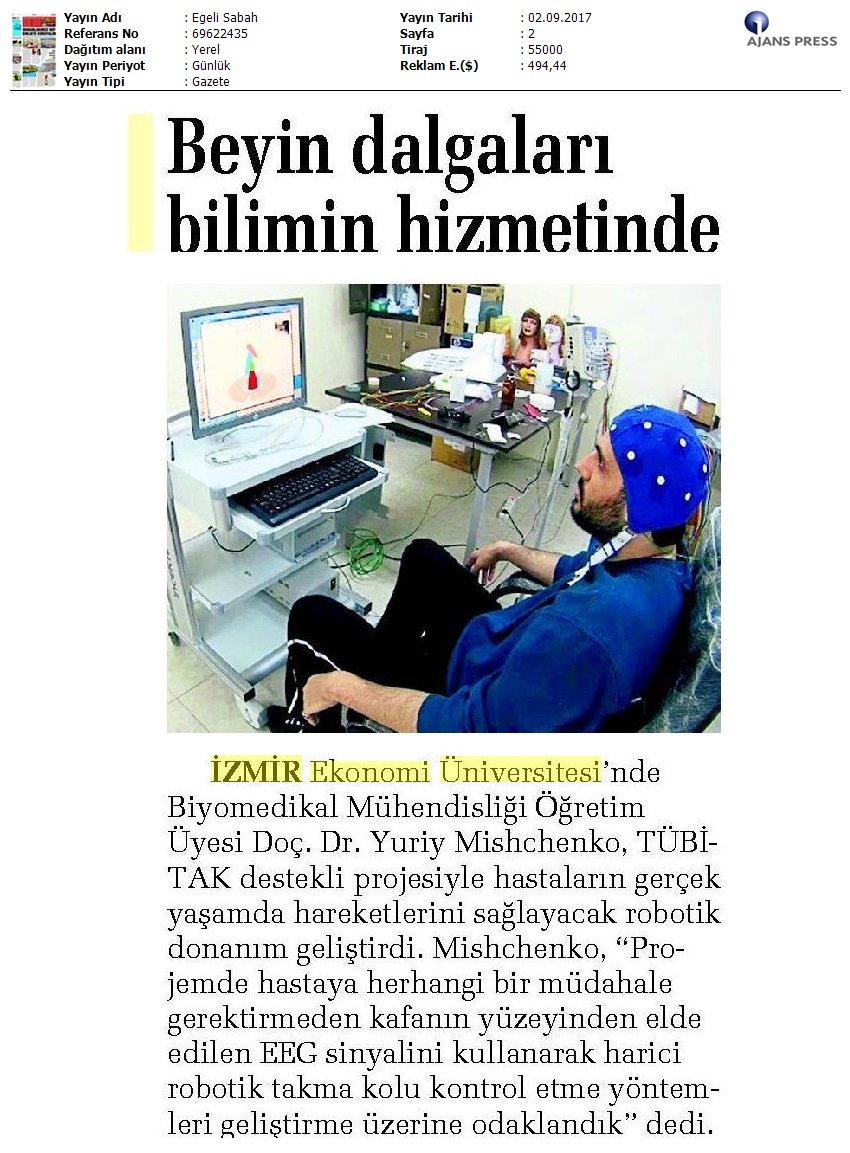

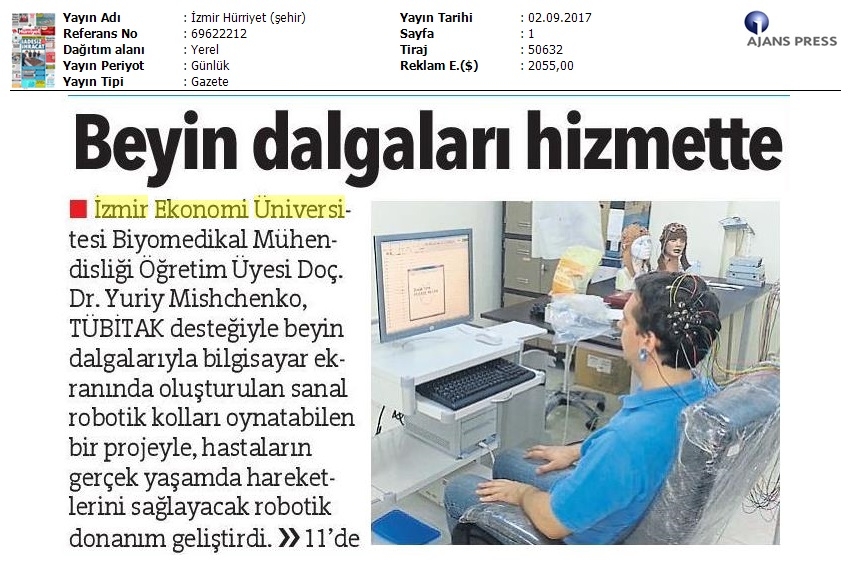

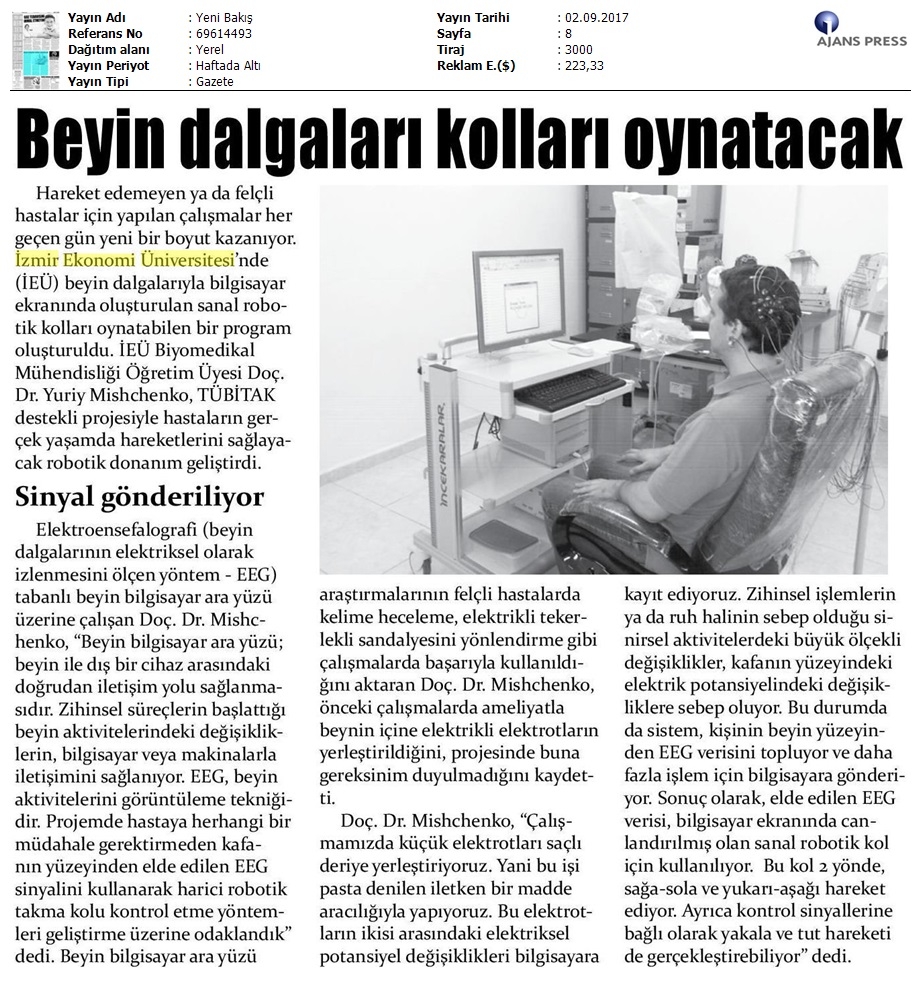

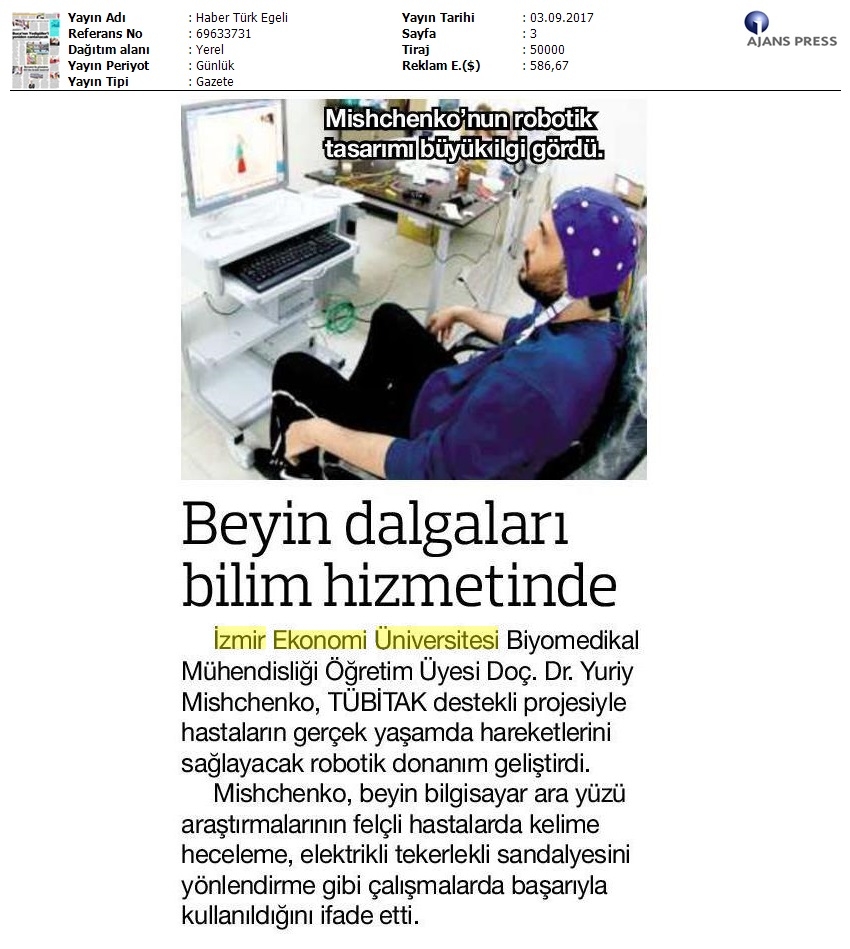

Assoc. Prof. Dr. Mishchenko, who studies electroencephalography (EEG) based brain-computer interfaces, said, “Brain-computer interfaces (BCI) are a promising direction in human-computer interaction technology, allowing communication with computers or machines through changes in brain activity induced by mental processes. EEG is a brain activity imaging technique. My project focuses on developing methods for controlling an external robotic arm prosthesis using the EEG signal, acquired noninvasively from the surface of the head.”

Assoc. Prof. Dr. Mishchenko reported that BCI has been used successfully in research settings to control a robotic manipulator, for word spelling by fully paralyzed patients, to interface with computer software such as email or internet browsers, and to steer a motorized wheelchair. He said that in the past electrodes had to be placed inside the brain through surgery, but that his project no longer requires this.

Assoc. Prof. Dr. Mishchenko said, “In our study, we attach small electrodes to the skin on the head with a conductive paste and continuously record the small changes in the distribution of electrical potential over the surface of the head. Large-scale changes in neural activity caused by mental processes or changes in emotional state produce changes in this electrical potential. The system collects the EEG data from the surface of a person’s head and sends it to a computer for further processing. The acquired EEG data is then used to control a virtual robotic manipulator arm simulated on a computer screen. The arm can move along two axes (left and right, forward and backward) and can perform a grab-and-hold movement based on the control signals provided by the BCI user.”
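The behavior of the simulated arm described above can be pictured with a small sketch. This is only an illustration under stated assumptions, not the project's software: the class name `VirtualArm`, the command strings, and the step size are all hypothetical, since the article does not describe the program's interface.

```python
from dataclasses import dataclass

# Assumed step size per command, in arbitrary screen units (not from the article).
STEP = 1


@dataclass
class VirtualArm:
    """Hypothetical model of an arm that moves along two axes and can grab."""
    x: int = 0              # left (-) / right (+)
    y: int = 0              # backward (-) / forward (+)
    holding: bool = False   # True once a grab-and-hold has been performed

    def apply(self, command: str) -> None:
        """Update the arm state for one decoded control signal."""
        if command == "left":
            self.x -= STEP
        elif command == "right":
            self.x += STEP
        elif command == "forward":
            self.y += STEP
        elif command == "backward":
            self.y -= STEP
        elif command == "grab":
            self.holding = True
        elif command == "idle":
            pass  # user is passive, so the arm does nothing
        else:
            raise ValueError(f"unknown command: {command}")


# Example: two steps right, one step forward, then grab.
arm = VirtualArm()
for cmd in ["right", "right", "forward", "grab"]:
    arm.apply(cmd)
print(arm)  # VirtualArm(x=2, y=1, holding=True)
```

Keeping the arm state separate from the signal decoding means the same commands could, in principle, drive either a simulated arm or real hardware.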

‘Six different commands are recognized’

Assoc. Prof. Dr. Mishchenko reported that, in their system, imagining a movement of the left or right hand is treated as a command for the computer to turn the simulated robotic arm left or right. He stated the following:

“Similarly, left or right leg movement is used to signal the computer to move the robotic arm forward or backward, and moving one’s tongue can be used to perform the grabbing motion. We performed a large number of original experiments in our laboratory with more than 10 participants. When a person attempts to communicate a control signal to the computer to perform the related action, the system responds correctly 90-95% of the time. With six distinct control signals (left and right hand, left and right leg, and tongue movements, or remaining passive), our system could correctly detect the person’s intention. The performance demonstrated so far establishes important groundwork on which to build, showing that control based on electroencephalographic brain activity can indeed be achieved. This groundwork will be used in the future to build more sophisticated software that can use that control signal to perform smarter and more complex actions, as well as robotic hardware that will perform actions in the real world to assist immobilized or paralyzed patients.”
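The six control signals and the reported response rate can be made concrete with a short sketch. It is illustrative only. The label names are hypothetical, and the article does not say which leg corresponds to forward and which to backward, so that pairing is an assumption. The trial lists are made-up placeholders, not experimental data.

```python
# Six mental states mapped to arm actions.
# Assumption: left leg = forward, right leg = backward (not stated in the article).
COMMAND_MAP = {
    "left_hand": "left",
    "right_hand": "right",
    "left_leg": "forward",    # assumed pairing
    "right_leg": "backward",  # assumed pairing
    "tongue": "grab",
    "passive": "idle",
}


def accuracy(intended, decoded):
    """Fraction of trials in which the decoded signal matches the intended one."""
    if len(intended) != len(decoded):
        raise ValueError("both lists must have the same length")
    if not intended:
        raise ValueError("at least one trial is required")
    correct = sum(i == d for i, d in zip(intended, decoded))
    return correct / len(intended)


# Made-up trial labels for illustration only (10 trials, one decoding error).
intended = ["left_hand", "right_hand", "tongue", "passive", "left_leg",
            "right_leg", "left_hand", "tongue", "right_hand", "passive"]
decoded = ["left_hand", "right_hand", "tongue", "passive", "left_leg",
           "right_leg", "left_hand", "tongue", "left_hand", "passive"]

print(accuracy(intended, decoded))        # 0.9
print([COMMAND_MAP[d] for d in decoded])  # arm actions the system would perform
```

A response rate of 90-95% in this sense means that roughly one in every ten to twenty attempted commands is misread.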